Shipped · In personal use

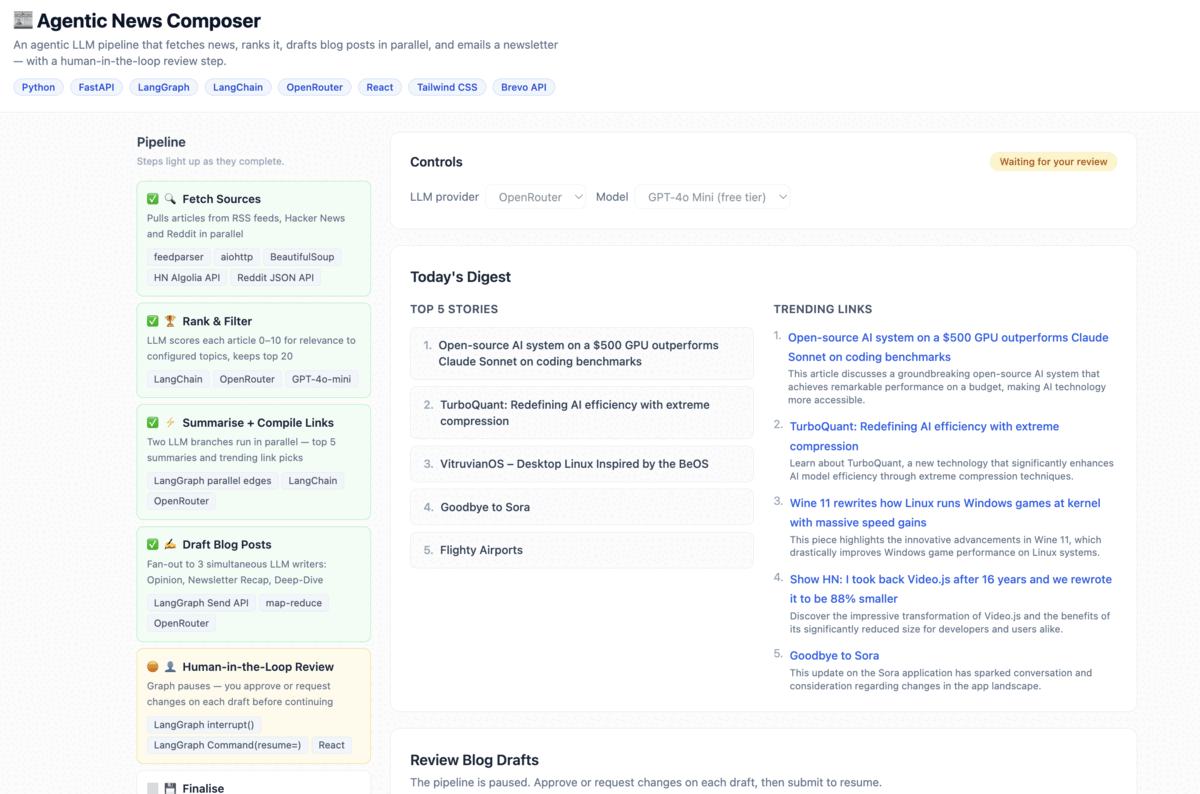

Agentic News Composer is a personal content tool I built to solve a daily friction point: staying on top of the tech news landscape and turning relevant stories into structured blog content takes hours of manual work. This pipeline does it in minutes.

Every morning it collects and ranks the day's top stories, then drafts three blog posts in parallel, each with a distinct voice. Before anything is saved, I review each draft in the UI and either approve it or send it back with revision notes. Only when I'm happy does the pipeline finalise the output to a dated Markdown file.

Keeping up with tech news is a full-time job

Staying current in AI and product development means monitoring dozens of sources daily: RSS feeds, Hacker News, Reddit, newsletters. Reading everything isn't feasible, and skimming misses context. Even once you find the right stories, turning them into shareable content takes time and the mental energy to shift into writing mode.

The tools I tried either aggregate (but don't write) or write (but don't let you steer the output or review it before publishing). I found none that closed the full loop from source collection to human-approved draft.

An agentic pipeline with a human in the loop

The system is a LangGraph `StateGraph` that handles the full workflow end-to-end. It fetches sources concurrently, uses an LLM to rank and filter stories by relevance to configurable topic keywords, summarises the top five, then fans out to three parallel draft-writing agents, each writing in a different style (Opinion, Newsletter Recap, Deep Dive).
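The fetch, rank, and fan-out stages described above can be sketched with the standard library alone. This is an illustration of the pattern, not the project's code: plain `asyncio` stands in for the LangGraph nodes, a keyword-overlap score stands in for the LLM ranking, the summarise step is omitted, and `fetch_source`, `score`, `write_draft`, and `run_pipeline` are hypothetical names with canned data.

```python
import asyncio

STYLES = ("Opinion", "Newsletter Recap", "Deep Dive")


async def fetch_source(name: str) -> list:
    """Hypothetical stand-in for a network fetch; returns canned stories."""
    await asyncio.sleep(0)  # yield to the event loop, as a real HTTP call would
    canned = {
        "rss": [{"title": "New agent framework released", "source": "rss"}],
        "hn": [
            {"title": "Why LLM evals matter", "source": "hn"},
            {"title": "A history of the semicolon", "source": "hn"},
        ],
    }
    return canned.get(name, [])


def score(story: dict, keywords: list) -> int:
    """Count keyword hits in the title (placeholder for the LLM ranking)."""
    title = story["title"].lower()
    return sum(1 for kw in keywords if kw.lower() in title)


async def write_draft(style: str, stories: list) -> str:
    """Hypothetical stand-in for one draft-writing agent."""
    await asyncio.sleep(0)
    titles = "; ".join(s["title"] for s in stories)
    return f"[{style}] {titles}"


async def run_pipeline(sources: list, keywords: list, top_n: int = 5) -> dict:
    # Fetch every source concurrently and flatten the results.
    batches = await asyncio.gather(*(fetch_source(s) for s in sources))
    stories = [story for batch in batches for story in batch]

    # Drop stories with no keyword hit, then keep the top_n highest scores.
    relevant = [s for s in stories if score(s, keywords) > 0]
    ranked = sorted(relevant, key=lambda s: score(s, keywords), reverse=True)
    top = ranked[:top_n]

    # Fan out: one draft per style, all running concurrently.
    drafts = await asyncio.gather(*(write_draft(style, top) for style in STYLES))
    return dict(zip(STYLES, drafts))
```

Calling `asyncio.run(run_pipeline(["rss", "hn"], ["agent", "LLM"]))` returns a dictionary with one draft per style. In a graph-based version, each coroutine would roughly correspond to a node, and the final `gather` to the parallel edges.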

The graph then pauses via `interrupt()` to surface all three drafts in the Streamlit UI. I can approve each one or flag it with revision notes. Only the flagged drafts are regenerated; approved ones are preserved. The loop repeats until everything is approved, then the digest is written to disk.
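The selective-regeneration rule is the part of this loop that is easiest to get wrong, so here is a minimal sketch of it in plain Python. It assumes feedback arrives as a mapping from style to revision notes, with `None` meaning approved. The names `regenerate`, `apply_review`, and `write_digest`, and the `YYYY-MM-DD.md` file layout, are illustrative assumptions rather than the project's actual code.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def regenerate(style: str, previous: str, notes: str) -> str:
    """Hypothetical stand-in for re-running one draft agent with notes."""
    return f"{previous} (revised: {notes})"


def apply_review(drafts: dict, feedback: dict) -> tuple:
    """Regenerate only the flagged drafts.

    feedback maps style -> Optional[str]; None (or "") means approved.
    Returns (updated_drafts, all_approved).
    """
    updated = dict(drafts)  # approved drafts are carried over unchanged
    for style, notes in feedback.items():
        if notes:
            updated[style] = regenerate(style, drafts[style], notes)
    all_approved = not any(feedback.values())
    return updated, all_approved


def write_digest(drafts: dict, out_dir: Path, day: Optional[date] = None) -> Path:
    """Write all drafts to a dated Markdown file (assumed naming scheme)."""
    day = day or date.today()
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / f"{day.isoformat()}.md"
    body = "\n\n".join(f"## {style}\n\n{text}" for style, text in drafts.items())
    path.write_text(f"# Digest {day.isoformat()}\n\n{body}\n", encoding="utf-8")
    return path
```

A driver would call `apply_review` once per review round and call `write_digest` only when `all_approved` comes back true, which mirrors the pause, revise, and resume cycle described above.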

The review UI in action

The Streamlit interface surfaces all three drafts side by side after the pipeline runs. Each draft can be approved or flagged with revision notes before the graph continues.

What it's built with and why

| Technology | Role & rationale |
| --- | --- |
| LangGraph | Orchestrates the full agentic pipeline as a typed `StateGraph` with parallel edges, conditional routing, interrupt/resume, and checkpointing. Chosen over a simple chain because the revision loop and fan-out pattern require proper graph semantics. |
| LangChain / ChatOpenAI | LLM calls routed via OpenRouter, making the model swappable via a single environment variable without changing any application code. |
| Streamlit | UI layer for the human review step. Session state persists across the interrupt/resume cycle; live progress is surfaced via `st.status()`. |
| SQLite (`SqliteSaver`) | Checkpoints the graph state to disk so a process restart mid-run doesn't lose progress. Each run is isolated by a UUID thread ID. |
| asyncio / aiohttp | Concurrent fetching of all news sources inside a single LangGraph node, keeping the fetch step fast regardless of source count. |
| feedparser + BeautifulSoup | RSS/Atom ingestion and HTML scraping for sources that don't provide structured feeds. |
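To make the checkpointing row concrete, here is a small sketch of the underlying idea using only the standard library's `sqlite3`: state is serialised per step and keyed by a UUID thread ID, so one run never reads another run's progress. This is not `SqliteSaver`'s real schema or API; the `checkpoints` table and the helper names are assumptions made for illustration.

```python
import json
import sqlite3
import uuid
from typing import Optional


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a checkpoint store; the schema is illustrative."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        " thread_id TEXT NOT NULL,"
        " step INTEGER NOT NULL,"
        " state TEXT NOT NULL,"
        " PRIMARY KEY (thread_id, step))"
    )
    return conn


def new_thread_id() -> str:
    """One fresh UUID per pipeline run keeps runs isolated."""
    return str(uuid.uuid4())


def save_checkpoint(conn: sqlite3.Connection, thread_id: str, step: int, state: dict) -> None:
    """Persist the state for one step; the with-block commits the write."""
    with conn:
        conn.execute(
            "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
            (thread_id, step, json.dumps(state)),
        )


def load_latest(conn: sqlite3.Connection, thread_id: str) -> Optional[dict]:
    """Return the most recent state for a run, or None if it has none."""
    row = conn.execute(
        "SELECT state FROM checkpoints WHERE thread_id = ?"
        " ORDER BY step DESC LIMIT 1",
        (thread_id,),
    ).fetchone()
    return json.loads(row[0]) if row else None
```

After a restart, a run would resume by calling `load_latest` with its thread ID. Passing a file path to `open_store` instead of the default `:memory:` is what makes the state survive the process.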

Shipped and in daily use

The pipeline runs reliably and I use it every morning. The core loop (fetch, rank, draft, review, finalise) works end-to-end with full state persistence across restarts.

Planned next steps:

- Add a web-based publishing step to push approved drafts directly to a CMS or newsletter platform.
- Introduce observability with Langfuse to trace LLM calls, monitor token usage, and run evals on draft quality over time.
- Replace the configurable keyword ranking with a personalisation layer that learns from approval history.
- Package as a scheduled job (cron + headless mode) so it runs automatically without a manual trigger.